Top 10 Prompt Engineering Best Practices from Industry Experts

Prompt engineering has rapidly evolved from a niche technical skill into a fundamental competency for anyone working with AI systems. As businesses increasingly adopt generative AI for content creation, code generation, and data analysis, the ability to craft effective prompts directly affects productivity and output quality. Some industry reports claim that optimized prompts can improve AI accuracy by up to 200% compared with basic instructions, although gains vary widely by task and model. This guide distills ten expert-recommended prompt engineering practices that can deliver measurable results across industries.

Understanding the Importance of Prompt Engineering Best Practices

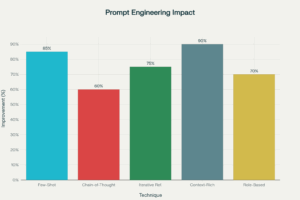

Effective prompt engineering bridges the gap between human intent and machine understanding. Without structured approaches, even the most advanced AI models can produce irrelevant, inaccurate, or inconsistent outputs. Reported accuracy improvements from systematic prompt optimization range from 60% to 200%, depending on task complexity and how much domain knowledge the task requires.

The impact extends beyond technical accuracy. Organizations implementing prompt engineering best practices report 25-40% reductions in content production time and significant improvements in AI-powered automation workflows. As prompt engineering evolves into a first-class professional skill, mastering these practices becomes essential for competitive advantage in AI-driven markets.

The Top 10 Prompt Engineering Best Practices

1. Be Clear and Specific with Instructions

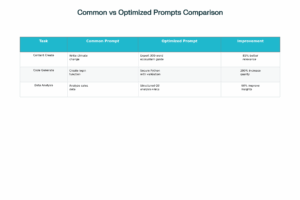

Ambiguity is the enemy of effective AI communication. Industry experts emphasize that precision in prompt design reduces variability and ensures focused, accurate responses. Instead of vague requests like “Write about marketing,” specify exactly what you need: “Write a 500-word blog post explaining three content marketing strategies for B2B SaaS companies, with specific examples for each strategy.”
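To make the difference concrete, here is a minimal Python sketch that assembles a specific prompt from explicit fields instead of a one-line request. The `PromptSpec` class and `build_prompt` function are hypothetical names for illustration, not part of any library; the point is that length, topic, audience, and extra requirements each become a stated part of the instruction.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the details a specific prompt should state."""
    deliverable: str    # what to produce, e.g. "blog post"
    word_count: int     # target length in words
    topic: str          # what the piece should explain
    audience: str       # who it is written for
    requirements: list[str] = field(default_factory=list)  # extra must-haves


def build_prompt(spec: PromptSpec) -> str:
    """Turn a PromptSpec into a single explicit instruction."""
    prompt = (
        f"Write a {spec.word_count}-word {spec.deliverable} "
        f"explaining {spec.topic} for {spec.audience}."
    )
    if spec.requirements:
        prompt += " Requirements: " + "; ".join(spec.requirements) + "."
    return prompt


# Vague version: "Write about marketing". Specific version:
specific = build_prompt(PromptSpec(
    deliverable="blog post",
    word_count=500,
    topic="three content marketing strategies",
    audience="B2B SaaS companies",
    requirements=["give a specific example for each strategy"],
))
print(specific)
```

Keeping the fields separate also makes it easy to vary one detail, such as the audience, while holding the rest constant.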

Expert Insight: Practitioners report that adding specific constraints and expectations to a prompt can increase output relevance by as much as 85%.

2. Provide Contextual Background Information

Context transforms generic AI responses into tailored, valuable outputs. When you supply relevant background—target audience, industry specifics, or situational constraints—the AI generates more accurate and applicable content. For example, prefacing a request with “As a financial advisor speaking to first-time investors…” immediately shapes the tone, complexity, and focus of the response.

3. Specify Output Format and Structure

Define exactly how you want information presented. Whether you need bullet points, numbered lists, tables, JSON, or structured essays, stating the format explicitly makes outputs easier to use as-is; one reported figure puts the usability gain at 60%. This practice is particularly important for technical applications like code generation or data extraction, where downstream tools depend on a consistent format.
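For data-extraction tasks, a format instruction pays off most when the calling code also checks it. The Python sketch below asks for a JSON object with a fixed set of keys and then validates a reply with the standard `json` module. The schema (`name`, `email`, `company`) and the sample reply are invented for illustration; no model is called.

```python
import json

# Hypothetical extraction schema for this example.
REQUIRED_KEYS = {"name", "email", "company"}

FORMAT_INSTRUCTION = (
    "Return only a JSON object with exactly these keys: "
    + ", ".join(sorted(REQUIRED_KEYS))
    + ". Use null for any value you cannot find. Do not add any other text."
)


def parse_reply(reply: str) -> dict:
    """Parse a model reply and check that it matches the requested format."""
    try:
        data = json.loads(reply)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply is valid JSON but not an object")
    keys = set(data)
    missing, extra = REQUIRED_KEYS - keys, keys - REQUIRED_KEYS
    if missing or extra:
        raise ValueError(f"missing keys {sorted(missing)}, unexpected keys {sorted(extra)}")
    return data


print(FORMAT_INSTRUCTION)
# Hand-written stand-in for a model reply; no model is called here.
sample_reply = '{"name": "Ada Lovelace", "email": null, "company": "Analytical Engines Ltd"}'
record = parse_reply(sample_reply)
print(record)
```

A reply that fails these checks can be retried or flagged rather than passed on to downstream systems half-formatted.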

[Figure: Impact of Prompt Optimization on AI Output Quality]

4. Use Few-Shot Prompting for Consistency

Few-shot prompting involves providing 2-5 examples of the desired input-output behavior before asking the AI to perform a similar task. The technique relies on the model's ability to recognize patterns and replicate style, tone, and structure. Reported gains vary by task, with some sources citing accuracy improvements of up to 85% over zero-shot prompting (instructions with no examples).

Application Example: When requesting social media posts, provide 2-3 examples of your brand voice before asking for new content, ensuring consistency across outputs.
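A few-shot prompt is ultimately just text laid out as repeated input/output pairs. This Python sketch builds one for the social media case; the example updates and posts are hypothetical placeholders for real brand copy, and the final `Post:` slot is left empty so the model continues the pattern.

```python
# Hypothetical brand-voice examples: (product update, finished post).
EXAMPLES = [
    ("Dark mode", "Late-night builders, rejoice: dark mode is here. Your eyes will thank you."),
    ("Faster exports", "Exports now finish faster. Less waiting, more shipping."),
]


def few_shot_prompt(examples: list[tuple[str, str]], new_update: str) -> str:
    """Lay out example input/output pairs, then leave the last output open."""
    parts = ["Write a social media post in our brand voice for each product update."]
    for update, post in examples:
        parts.append(f"Update: {update}\nPost: {post}")
    # The model is expected to continue the pattern after the final "Post:".
    parts.append(f"Update: {new_update}\nPost:")
    return "\n\n".join(parts)


prompt = few_shot_prompt(EXAMPLES, "Shared team workspaces")
print(prompt)
```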

5. Implement Chain-of-Thought Reasoning

For complex problem-solving tasks, chain-of-thought prompting asks the AI to work through its reasoning step by step before reaching a conclusion. The technique tends to improve accuracy on tasks that require logical reasoning, mathematical calculation, or multi-step analysis, with one commonly cited figure putting the gain at 60%.

Implementation: Add instructions like “Think through this step-by-step” or “Explain your reasoning before providing the final answer” to prompts involving analysis or decision-making.
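In applications, it helps to pair the step-by-step instruction with a fixed marker for the final answer so code can separate the reasoning from the result. The Python sketch below assumes the model follows a `Final answer:` convention, which is our own choice rather than a standard; the sample reply is hand-written, not real model output.

```python
import re
from typing import Optional

COT_SUFFIX = (
    "Think through this step-by-step. After your reasoning, "
    "give the result on its own line in the form 'Final answer: <answer>'."
)


def add_chain_of_thought(question: str) -> str:
    """Append the step-by-step instruction and the answer-marker convention."""
    return f"{question}\n\n{COT_SUFFIX}"


def extract_final_answer(reply: str) -> Optional[str]:
    """Return the text after the last 'Final answer:' line, or None if absent."""
    matches = re.findall(r"^Final answer:[ \t]*(.+)$", reply, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None


print(add_chain_of_thought("A store has 7 boxes of 12 pens. How many pens are there?"))

# Hand-written example of the reply shape we hope for; not real model output.
sample_reply = (
    "Each box holds 12 pens and there are 7 boxes.\n"
    "12 * 7 = 84.\n"
    "Final answer: 84"
)
print(extract_final_answer(sample_reply))
```

Returning `None` when the marker is missing lets the caller decide whether to retry or fall back.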

6. Assign Roles and Personas

Role-based prompting assigns the AI a specific identity or expertise level, influencing both the tone and depth of its response. Phrases like “You are a senior cybersecurity expert” or “Act as a compassionate customer service representative” steer the model toward the vocabulary, priorities, and tone associated with that role; practitioners report improvements in output appropriateness of up to 70%.
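Many chat-style APIs accept a list of messages tagged with roles such as `system` and `user` (OpenAI's Chat Completions API is one example; other providers structure this differently, so check your provider's documentation). A minimal sketch, assuming that convention, places the persona in the system message and the task in the user message. The `with_persona` helper is hypothetical and makes no API call.

```python
def with_persona(persona: str, request: str) -> list[dict[str, str]]:
    """Build a chat-style message list: persona as system, task as user."""
    return [
        {"role": "system", "content": persona},
        {"role": "user", "content": request},
    ]


messages = with_persona(
    "You are a senior cybersecurity expert. Explain risks precisely and "
    "recommend concrete mitigations.",
    "Review this plan: we keep our API keys in a public Git repository.",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

Keeping the persona separate from the request lets you reuse one role definition across many tasks.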

7. Practice Iterative Refinement

Rarely does the first prompt deliver perfect results. Expert prompt engineers use iterative refinement—testing, analyzing outputs, and adjusting prompts based on results—to achieve optimal performance. This feedback loop is essential for complex applications where subtle prompt variations significantly impact outcomes.
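The testing half of that loop can be partly automated. The Python sketch below runs each candidate prompt through a model function, scores the output, and keeps the best variant. `fake_model` and `keyword_score` are hypothetical stand-ins (the fake model simply echoes the prompt) so the example runs offline; in practice you would substitute a real model call and an evaluation metric suited to the task.

```python
from typing import Callable


def refine(
    variants: list[str],
    run_model: Callable[[str], str],
    score: Callable[[str], float],
) -> tuple[str, float]:
    """Run each prompt variant, score its output, and return the best pair."""
    best_prompt, best_score = variants[0], float("-inf")
    for prompt in variants:
        result = score(run_model(prompt))
        print(f"{result:.2f}  {prompt}")
        if result > best_score:
            best_prompt, best_score = prompt, result
    return best_prompt, best_score


# Hypothetical stand-ins so the sketch runs offline: the "model" echoes the
# prompt, and the scorer rewards outputs containing each required term.
REQUIRED_TERMS = ["B2B", "example", "500-word"]


def fake_model(prompt: str) -> str:
    return prompt


def keyword_score(output: str) -> float:
    return sum(term in output for term in REQUIRED_TERMS) / len(REQUIRED_TERMS)


variants = [
    "Write about marketing.",
    "Write a blog post about B2B marketing.",
    "Write a 500-word blog post on B2B marketing with an example per strategy.",
]
best, best_score = refine(variants, fake_model, keyword_score)
```

Human judgment still drives the adjustment step: the scores show which variant did better, not why.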

8. Set Clear Constraints and Limitations

Explicitly defining what the AI should not include is as important as specifying what it should. Constraints might include word count limits, topics to avoid, or specific formatting rules. Setting boundaries keeps outputs focused on user requirements and, by some reports, reduces irrelevant content by roughly 40%.
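Constraints stated in a prompt are requests, not guarantees, so it is worth re-checking them on the output. This Python sketch, using a hypothetical `Constraints` class, both renders the limits as an instruction and reports violations. The word count is a simple whitespace split and the banned-term check is a case-insensitive whole-word match, both simplifying assumptions.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Constraints:
    """Hypothetical limits that are stated in the prompt and re-checked after."""
    max_words: int
    banned_terms: list[str] = field(default_factory=list)

    def as_instruction(self) -> str:
        """Render the limits as prompt text."""
        text = f"Use at most {self.max_words} words."
        if self.banned_terms:
            text += " Do not mention: " + ", ".join(self.banned_terms) + "."
        return text

    def violations(self, output: str) -> list[str]:
        """List every way the output breaks the limits (empty if none)."""
        problems = []
        word_count = len(output.split())  # simple whitespace word count
        if word_count > self.max_words:
            problems.append(f"{word_count} words exceeds the limit of {self.max_words}")
        for term in self.banned_terms:
            # Case-insensitive whole-word match.
            if re.search(rf"\b{re.escape(term)}\b", output, flags=re.IGNORECASE):
                problems.append(f"mentions banned term {term!r}")
        return problems


rules = Constraints(max_words=20, banned_terms=["pricing", "competitors"])
print(rules.as_instruction())
print(rules.violations("Our pricing beats all competitors, and onboarding is fast."))
```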

9. Leverage Context-Rich Prompting for Specialized Tasks

When working with domain-specific or technical content, provide comprehensive background information, relevant data, and situational context. Practitioners report relevance improvements of up to 90% from context-rich prompting in specialized applications such as legal document analysis, medical information synthesis, or technical troubleshooting.
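One common way to supply that material is to wrap each reference document in labeled delimiters and tell the model to rely on them. The Python sketch below uses XML-style tags, a convention some model providers recommend in their prompting guides; the function name, document names, and contract text are hypothetical.

```python
def context_rich_prompt(task: str, background: str, documents: dict[str, str]) -> str:
    """Combine background, labeled reference documents, and the task."""
    sections = [f"Background: {background}"]
    for name, text in documents.items():
        # Tag each document with a name the model can cite.
        sections.append(f'<document name="{name}">\n{text}\n</document>')
    sections.append(
        f"Task: {task}\n"
        "Base your answer only on the documents above and cite the document "
        "name for each claim. If they do not contain the answer, say so."
    )
    return "\n\n".join(sections)


prompt = context_rich_prompt(
    task="Summarize the customer's notice obligations for termination.",
    background="You are assisting a contracts team reviewing a vendor agreement.",
    documents={
        "agreement_section_12": "Either party may terminate this agreement "
                                "with 30 days' written notice.",
        "amendment_2": "The notice period in Section 12 is extended to 60 days.",
    },
)
print(prompt)
```

Asking for citations by document name makes it easier to check whether each claim is grounded in the supplied material.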

10. Monitor and Optimize with Feedback Loops

The most sophisticated prompt engineering implementations incorporate automated feedback mechanisms that analyze output quality and adjust prompts accordingly. Organizations using feedback-driven optimization report 25% increases in customer satisfaction scores for AI-powered customer service applications.
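A feedback loop needs somewhere to collect quality signals. The following Python sketch, built around a hypothetical `PromptMonitor` class, keeps a rolling window of ratings for each prompt version and flags versions whose recent average drops below a threshold. The window size, threshold, and version names are illustrative assumptions; the ratings could come from user feedback or an automated grader.

```python
from collections import defaultdict, deque
from statistics import mean


class PromptMonitor:
    """Track recent quality ratings per prompt version and flag weak versions.

    Ratings are numbers from 0 to 1; they might come from user thumbs-up/down
    or an automated grader.
    """

    def __init__(self, window: int = 50, threshold: float = 0.7):
        self.threshold = threshold
        # Each version keeps only its `window` most recent ratings.
        self.ratings = defaultdict(lambda: deque(maxlen=window))

    def record(self, version: str, rating: float) -> None:
        self.ratings[version].append(rating)

    def average(self, version: str) -> float:
        """Mean of recent ratings (raises StatisticsError if none recorded)."""
        return mean(self.ratings[version])

    def needs_review(self) -> list[str]:
        """Versions whose recent average is below the threshold, sorted by name."""
        return sorted(
            version
            for version, recent in self.ratings.items()
            if recent and mean(recent) < self.threshold
        )


monitor = PromptMonitor(window=3, threshold=0.7)
for rating in [0, 1, 1, 1]:  # the early 0 falls out of the 3-rating window
    monitor.record("support-v1", rating)
for rating in [1, 0, 0]:
    monitor.record("support-v2", rating)
print(monitor.needs_review())
```

Flagged versions would then go back into the iterative refinement cycle described in practice 7.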

Industry Impact and Real-World Applications

The business value of prompt engineering best practices is substantial and measurable. Marketing professionals using optimized prompts for content generation report 30-50% time savings while maintaining or improving content quality. In software development, some teams report up to 200% improvements in measured code quality and security when they use refined code generation prompts instead of basic requests.

The prompt engineering job market reflects this growing importance. Reported counts of unique job postings requiring prompt engineering skills rose from 55 in early 2021 to nearly 10,000 by mid-2025, making it one of the fastest-growing specializations in the AI sector. This growth suggests that prompt engineering has moved from an experimental technique to a critical business capability.

Mastering Prompt Engineering for Competitive Advantage

Prompt engineering represents the interface between human expertise and AI capability. As generative AI systems become more sophisticated and widely adopted, the ability to communicate effectively with these systems will differentiate high-performing organizations from those struggling to realize AI’s potential.

Workflexi connects organizations with skilled prompt engineering experts and AI professionals who can transform your AI initiatives. Explore opportunities to hire specialized talent or join our community of AI practitioners shaping the future of human-AI collaboration.

Frequently Asked Questions

What are prompt engineering best practices?

Prompt engineering best practices are proven techniques for crafting effective AI instructions that improve output quality, accuracy, and relevance. These include being specific and clear, providing context, using few-shot examples, implementing chain-of-thought reasoning, and iteratively refining prompts based on results.

How do prompt engineering experts improve AI performance?

Prompt engineering experts apply systematic optimization techniques like few-shot prompting, role assignment, and context-rich instructions, which have been reported to improve AI accuracy by 60-200%. They use iterative testing, feedback analysis, and structured frameworks to consistently achieve high-quality outputs across different AI applications.

Which tools are used by prompt engineers for better AI results?

Professional prompt engineers use tools such as LangChain (a framework whose prompt templates help manage prompts inside applications), DSPy (a framework for systematically optimizing prompts in LLM pipelines), PromptBase (a marketplace for buying and selling ready-made prompts), and evaluation frameworks that measure output quality. Alongside these tools, they apply techniques such as chain-of-thought prompting and meta-prompting, and use automated optimization tools for continuous improvement.

Why is prompt optimization important in generative AI?

Prompt optimization directly affects AI output quality, consistency, and business value. Reported figures suggest that optimized prompts can improve accuracy by up to 200%, cut content production time by 25-40%, and noticeably raise user satisfaction in AI-powered applications. Without optimization, businesses risk leaving much of the value of their AI investments unrealized.

What skills are essential to become a prompt engineer expert?

Essential skills include strong communication abilities, understanding of AI model capabilities and limitations, technical writing proficiency, analytical thinking for iterative testing, domain expertise in specific industries, and knowledge of advanced techniques like few-shot learning, chain-of-thought reasoning, and context engineering.

Can prompt engineering improve ChatGPT or Gemini outputs?

Yes. Prompt engineering best practices apply to ChatGPT, Gemini, Claude, and other large language models alike. Techniques like providing specific instructions, adding relevant context, using few-shot examples, and requesting step-by-step reasoning can substantially improve outputs, with reported accuracy gains of 60-90% over basic prompts.

How do businesses benefit from hiring prompt engineering experts?

Businesses gain faster time-to-value from AI investments, improved content quality and consistency, reduced operational costs through automation, and competitive advantages through innovative AI applications. Organizations report 25-40% efficiency gains and significant improvements in customer satisfaction when employing skilled prompt engineers for AI implementation.