Top 10 Tools and Frameworks Every Machine Learning Expert Should Know

Why ML Tools and Frameworks Matter

The machine learning landscape has evolved dramatically, with 84% of developers now using or planning to use AI tools in their workflows. For machine learning experts, selecting the right tools and frameworks can mean the difference between rapid innovation and frustrating development bottlenecks. These frameworks provide pre-built architectures, optimized algorithms, and streamlined workflows that accelerate the journey from concept to deployment.

With the global machine learning market projected to reach $113.10 billion in 2025 and expand to $503.40 billion by 2030, the tools powering this revolution have become increasingly sophisticated. Whether you’re building deep learning models for computer vision, training natural language processors, or deploying production-ready AI systems, understanding the strengths and use cases of leading frameworks is essential for every machine learning expert.

The Top 10 Tools and Frameworks for Machine Learning Experts

1. TensorFlow: Enterprise-Grade Production Power

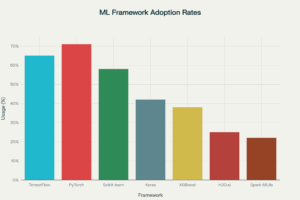

TensorFlow remains one of the most comprehensive machine learning frameworks, backed by Google’s extensive resources and broad community support. With approximately 65% of machine learning professionals using it, TensorFlow continues to dominate enterprise and production environments.

Core Features:

- Comprehensive ecosystem supporting deployment across mobile (TensorFlow Lite), web (TensorFlow.js), and cloud platforms

- TensorBoard for powerful visualization and monitoring

- Production-ready with TensorFlow Serving for scalable model deployment

- Support for distributed training across multiple GPUs and TPUs

Why Machine Learning Experts Choose It:

TensorFlow excels in production deployments where scalability, performance, and cross-platform compatibility are critical. TensorFlow 2.x executes eagerly by default, and wrapping code in `tf.function` traces it into a static computation graph that can be optimized for large-scale applications.
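As a minimal sketch of how eager and graph execution can coexist, the snippet below runs one function eagerly and then traces the same function into a graph with `tf.function`. The function name `scaled_sum` and the input values are illustrative assumptions, not part of any TensorFlow API.

```python
import tensorflow as tf


def scaled_sum(x, scale):
    # Plain Python function: when called directly it runs eagerly, op by op.
    return tf.reduce_sum(x * scale)


# tf.function traces the Python function into a reusable computation graph.
scaled_sum_graph = tf.function(scaled_sum)

x = tf.constant([1.0, 2.0, 3.0])
scale = tf.constant(2.0)

eager_result = scaled_sum(x, scale)        # eager execution
graph_result = scaled_sum_graph(x, scale)  # graph execution

print(float(eager_result), float(graph_result))
```

Both calls compute the same value. The graph version mainly pays off when the function is called repeatedly or exported for serving.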

Real-World Applications:

Google uses TensorFlow extensively across its products, including search algorithms, YouTube recommendations, and Google Photos. Major companies like Airbnb, Coca-Cola, and Twitter leverage TensorFlow for recommendation systems, predictive analytics, and computer vision applications.

2. PyTorch: The Research and Experimentation Leader

PyTorch has emerged as the framework of choice for researchers and machine learning experts who prioritize flexibility and rapid prototyping, with 71% of developers finding it easier to use than TensorFlow.

Core Features:

- Dynamic computation graphs enabling on-the-fly model modifications

- Pythonic syntax that integrates seamlessly with standard Python debugging tools

- First-class support in the Hugging Face Transformers library for state-of-the-art NLP

- Strong support for academic research and custom neural network architectures

Why Machine Learning Experts Prefer It:

PyTorch’s intuitive design allows immediate error reporting during execution, making debugging significantly easier. The framework’s dynamic nature makes it ideal for experimental projects where model architectures need frequent adjustments.
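The dynamic behavior described here can be sketched in a few lines. In this illustrative example (the function `forward` and the tensor values are assumptions chosen for clarity), ordinary Python control flow decides which operations run, and autograd differentiates whichever branch was actually executed.

```python
import torch


def forward(x, w):
    # The graph is built as this code runs, so the branch taken
    # can depend on the data itself.
    h = x @ w
    if h.sum() > 0:
        return torch.relu(h).sum()
    return (h ** 2).sum()


x = torch.tensor([[1.0, 2.0]])
w = torch.tensor([[0.5], [0.25]], requires_grad=True)

loss = forward(x, w)
loss.backward()  # gradients flow through the branch that executed

print(loss.item(), w.grad)
```

Because the code runs line by line, a standard debugger or a `print` call placed inside `forward` shows real tensor values at that point in execution.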

Real-World Applications:

Meta (Facebook) built PyTorch and uses it extensively across its AI infrastructure. Research institutions worldwide have adopted PyTorch, making it the dominant framework in academic AI publications. Companies like Tesla and Uber leverage PyTorch for autonomous vehicle development and real-time decision systems.

3. Scikit-learn: Classical Machine Learning Simplified

Scikit-learn provides machine learning experts with efficient implementations of traditional ML algorithms, making it the go-to framework for structured data problems and classical machine learning tasks.

Core Features:

- Comprehensive suite of algorithms for classification, regression, and clustering

- Consistent API design that simplifies learning and implementation

- Excellent documentation and extensive tutorial resources

- Built on NumPy, SciPy, and Matplotlib for seamless Python integration

Why It Remains Essential:

Despite the deep learning revolution, approximately 58% of machine learning professionals still rely on Scikit-learn for traditional ML tasks. Its simplicity and effectiveness for tabular data analysis make it indispensable.
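To illustrate the consistent API, here is a small sketch that trains two different classifiers on the bundled Iris dataset using exactly the same `fit` and `score` calls. The choice of models, split size, and random seed are assumptions made for the example.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Two unrelated algorithms, one shared interface.
models = {
    "logistic_regression": LogisticRegression(max_iter=1000),
    "random_forest": RandomForestClassifier(n_estimators=100, random_state=0),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)                 # same call for every estimator
    scores[name] = model.score(X_test, y_test)  # mean accuracy on held-out data

print(scores)
```

Swapping in another estimator usually means changing only the constructor line, which is what makes quick model comparisons on tabular data so convenient.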

Real-World Applications:

Financial institutions use Scikit-learn for credit scoring and fraud detection, while healthcare organizations apply it to patient risk stratification and diagnostic support systems. The framework excels in scenarios where interpretability and transparency are crucial.

4. Keras: High-Level Deep Learning Simplicity

Keras is a high-level API designed for rapid experimentation and prototyping of neural networks with minimal code. It is most commonly used with TensorFlow, and Keras 3 also runs on JAX and PyTorch backends.

Core Features:

- User-friendly interface that abstracts complex implementation details

- Modular architecture allowing easy assembly of neural network layers

- Support for multiple backends (TensorFlow, JAX, and PyTorch as of Keras 3)

- Excellent for educational purposes and quick proofs of concept

Why Machine Learning Experts Use It:

Keras dramatically reduces the code required to build and train deep learning models, with some implementations requiring 70% less code than raw TensorFlow. This efficiency makes it ideal for fast iteration and testing.
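As a rough sketch of how compact a Keras workflow can be, the example below defines, compiles, and trains a small binary classifier. The synthetic data, layer sizes, and epoch count are illustrative assumptions rather than recommended settings.

```python
import numpy as np
from tensorflow import keras

# Tiny synthetic binary-classification problem (illustrative data only).
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 4)).astype("float32")
y = (X.sum(axis=1) > 0).astype("float32")

# Stack layers in order; Keras infers the wiring between them.
model = keras.Sequential([
    keras.Input(shape=(4,)),
    keras.layers.Dense(8, activation="relu"),
    keras.layers.Dense(1, activation="sigmoid"),
])
model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])

history = model.fit(X, y, epochs=5, batch_size=32, verbose=0)
predictions = model.predict(X[:3], verbose=0)

print(predictions.shape)
```

The training loop, batching, and metric tracking are all handled by `fit`, which is where most of the code savings come from.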

Real-World Applications:

Startups and research teams use Keras for rapid prototyping before transitioning to production-optimized frameworks. It’s particularly popular in academic settings for teaching deep learning concepts.

5. Apache Spark MLlib: Big Data Machine Learning

Apache Spark MLlib provides distributed machine learning capabilities designed for processing massive datasets that don’t fit in memory on a single machine.

Core Features:

- Distributed computing architecture for handling petabyte-scale data

- Scalable implementations of classification, regression, clustering, and collaborative filtering

- Seamless integration with Spark’s ecosystem for data processing

- Support for multiple programming languages including Python, Scala, and Java

Why It’s Critical for Large-Scale ML:

Organizations dealing with big data scenarios rely on MLlib’s ability to distribute computation across clusters, enabling machine learning on datasets that would be impossible to process otherwise.

Real-World Applications:

Tech giants like Netflix and Spotify use Spark MLlib for recommendation systems that process billions of user interactions. E-commerce platforms leverage it for real-time personalization at scale.

6. JAX: High-Performance Numerical Computing

JAX represents the cutting edge of machine learning frameworks, offering automatic differentiation and GPU/TPU compilation for numerical computing.

Core Features:

- NumPy-like API with automatic differentiation capabilities

- Just-in-time compilation through XLA for CPUs, GPUs, and TPUs

- Composable function transformations (such as `grad`, `jit`, and `vmap`) for advanced ML research

- Excellent for custom gradient-based optimization algorithms

Why Advanced ML Experts Choose It:

JAX provides the flexibility to implement novel algorithms while achieving performance comparable to highly optimized C++ code. It’s becoming increasingly popular for research requiring custom operations.
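A short sketch can make the idea of composable transformations concrete. Here `jax.grad`, `jax.vmap`, and `jax.jit` are stacked to get compiled per-example gradients of a simple squared-error loss. The `loss` function and the sample arrays are assumptions chosen for illustration.

```python
import jax
import jax.numpy as jnp


def loss(w, x, y):
    # Squared error of a linear model for a single example.
    return (jnp.dot(w, x) - y) ** 2


# Compose transformations: differentiate with respect to w, vectorize over
# a batch of (x, y) pairs, then JIT-compile the combined function.
per_example_grads = jax.jit(jax.vmap(jax.grad(loss), in_axes=(None, 0, 0)))

w = jnp.array([1.0, -1.0])
xs = jnp.array([[1.0, 2.0], [3.0, 4.0]])
ys = jnp.array([0.0, 1.0])

grads = per_example_grads(w, xs, ys)  # one gradient row per example
print(grads)
```

Each transformation takes a function and returns a function, so they can be applied in whatever order the algorithm needs.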

7. H2O.ai: AutoML and Enterprise Solutions

H2O.ai focuses on democratizing machine learning through automated machine learning (AutoML) capabilities and enterprise-ready tools.

Core Features:

- Automated model selection, hyperparameter tuning, and feature engineering

- Support for distributed computing across clusters

- Integration with popular data science tools and platforms

- Both open-source and enterprise versions available

Why Organizations Adopt It:

H2O.ai reduces the expertise required to build effective ML models, with AutoML features that can match or exceed manually tuned models. This makes machine learning accessible to broader teams.

Real-World Applications:

Financial services firms use H2O.ai for risk modeling and fraud detection, while healthcare organizations apply it to patient outcome prediction and resource optimization.

8. MXNet: Scalable Deep Learning

MXNet provides efficient deep learning capabilities with strong support for distributed training across multiple machines and accelerators.

Core Features:

- Support for multiple programming languages including Python, R, Julia, and Scala

- Efficient memory usage for training large models

- Dynamic and static computation graph options

- Strong integration with AWS through Apache MXNet on AWS

Why It Matters:

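MXNet’s scalability and efficiency make it valuable for organizations requiring multi-language support and cloud deployment flexibility. Note, however, that the Apache MXNet project was retired to the Apache Attic in 2023 and is no longer actively developed, so it is better suited to maintaining existing systems than to starting new projects.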

9. RapidMiner: Visual Machine Learning Platform

RapidMiner offers a visual programming interface for building machine learning workflows without extensive coding.

Core Features:

- Drag-and-drop interface for designing ML pipelines

- Comprehensive data preparation and transformation tools

- Built-in visualization and reporting capabilities

- Integration with Python and R for custom operations

Why It’s Valuable:

RapidMiner enables domain experts with limited programming experience to build and deploy machine learning models, expanding ML capabilities across organizations.

10. Google Cloud AI Platform: End-to-End ML Operations

Google Cloud AI Platform (now Vertex AI) provides comprehensive tools for the entire machine learning lifecycle, from data preparation through model deployment and monitoring.

Core Features:

- Managed infrastructure for training and deploying models at scale

- AutoML capabilities for automated model development

- Integration with TensorFlow, PyTorch, and other popular frameworks

- MLOps tools for continuous training and monitoring

Why Enterprise ML Teams Choose It:

The platform eliminates infrastructure management overhead, allowing machine learning experts to focus on model development rather than deployment logistics.

How to Choose the Right Tool for Your Machine Learning Projects

Selecting the appropriate machine learning tool depends on several critical factors:

For Beginners: Start with Scikit-learn for traditional machine learning concepts, then progress to Keras for deep learning. These frameworks provide gentle learning curves with excellent documentation and community support.

For Research and Experimentation: PyTorch dominates academic research with its flexible, intuitive approach. Its dynamic computation graphs make it ideal for trying novel architectures and custom operations.

For Production Deployment: TensorFlow offers superior tools for deploying models across diverse platforms including mobile devices, web applications, and cloud infrastructure. Its mature ecosystem provides battle-tested solutions for scale.

For Big Data Scenarios: Apache Spark MLlib becomes essential when datasets exceed single-machine memory capacity. Its distributed architecture handles petabyte-scale data processing.

For Business Users: H2O.ai and RapidMiner provide accessible entry points through AutoML and visual interfaces, democratizing machine learning for domain experts without extensive coding backgrounds.

For Structured Data and Competitions: XGBoost, a gradient-boosting library not profiled in the list above, consistently delivers strong performance on tabular datasets. It remains a popular choice for Kaggle competitions and for production systems that need high accuracy on structured data.

Staying Current in a Rapidly Evolving Field

The frameworks highlighted here represent the current state of the art, but staying informed about emerging tools remains essential. As 78% of organizations now use AI in at least one business function, the demand for skilled machine learning experts proficient in these tools will only intensify.

Ready to connect with machine learning experts who can leverage these powerful tools to transform your business? Visit Workflexi today to find skilled ML professionals who bring both technical expertise and practical experience across all major frameworks and platforms.

Frequently Asked Questions

What are the most popular machine learning tools used today?

TensorFlow and PyTorch are the most widely adopted deep learning frameworks: approximately 65% of machine learning professionals use TensorFlow, and 71% of developers find PyTorch easier to use. Scikit-learn remains popular for classical ML, with about 58% of professionals relying on it, while Keras, XGBoost, and cloud platforms like Google Cloud AI also see significant use across industries.

Which framework is best for beginners in machine learning?

Scikit-learn is ideal for beginners learning traditional machine learning, offering simple APIs and excellent documentation. For deep learning, Keras provides the easiest entry point with minimal code requirements and intuitive design. Both have extensive tutorials and supportive communities.

Why do machine learning experts prefer TensorFlow or PyTorch?

Experts choose PyTorch for research and experimentation due to its dynamic computation graphs and Pythonic style that simplifies debugging. TensorFlow is preferred for production deployments because of its mature ecosystem, superior deployment tools (TF Lite, TF Serving), and excellent scalability across platforms.

What is the difference between machine learning frameworks and libraries?

Frameworks such as TensorFlow and PyTorch provide comprehensive, opinionated ecosystems for building, training, and deploying models. Libraries are more focused tools offering specific functionality, such as Scikit-learn for algorithms or NumPy for numerical computing, and are typically used as components within larger projects.

How do ML experts choose between open-source and paid ML tools?

Machine learning experts typically start with open-source tools (TensorFlow, PyTorch, Scikit-learn) for core development, then evaluate paid platforms when requiring enterprise features like managed infrastructure, automated MLOps, dedicated support, or advanced AutoML capabilities that justify the investment.

Are cloud-based machine learning tools better for scalability?

Often, yes. Cloud platforms like Google Cloud AI, Amazon SageMaker, and Azure Machine Learning excel at scalability by providing managed infrastructure, distributed training, and elastic resources. They’re particularly valuable for organizations lacking on-premise GPU clusters or requiring rapid scaling without infrastructure management overhead.

How can beginners start using these frameworks effectively?

Beginners should start with Scikit-learn for traditional ML fundamentals, then progress to Keras for deep learning basics. Focus on completing small projects, following official tutorials, participating in Kaggle competitions, and gradually exploring PyTorch or TensorFlow based on career interests. Consistent hands-on practice is more valuable than trying to learn everything simultaneously.